We built an AI orchestration layer. It cut our token costs by 40-70% per request and doubled response speed. Most teams don't do this. They throw everything at GPT-4 or Claude Opus. That is expensive, and it isn't aligned with what the task actually needs.

Here's what we learned — and what changed when we evolved our approach.

The Problem: Misaligned Models

Single-model AI pipelines make sense on the surface. One model handles everything. Simple. Reliable. Predictable costs.

Except it's not predictable. It's wasteful.

Consider a real request from our system: "What's our current MRR?" This is a data lookup. No reasoning. No creativity. No multi-step logic. Just execute a script, pull the database row, return it. We were sending it to Claude Opus.

Opus took 45 seconds and burned through 8,000 tokens. A pre-built script returns the same answer in about 3 seconds, and the script itself consumes zero tokens.

Now imagine that across 100 requests per day. You're bleeding money on capability you don't need.

On the flip side, send a task like "build a client health dashboard with real-time scoring" to a lightweight model and you get shallow output and broken code. Wrong tool for the job.

This is the hidden problem with single-model approaches: you're either overpaying for simple work or under-powering complex work. And because every request is billed at the same rate, your average cost is set by the most expensive model you run.

Our Solution: A Four-Tier Orchestration Layer

Early on, we tried the obvious approach: use a cheap model like Haiku to classify incoming requests, then route to the right model. It worked, but we realized something better — the orchestrator itself can handle most of the work.

Our current system uses Claude Sonnet as both the orchestrator and the primary workhorse. It sits in the main session, evaluates every request, and makes a decision: handle it directly, execute a script, or spawn a specialist sub-agent. Sonnet handles about 80% of requests (Tiers 0 and 1 below) without ever needing another model.

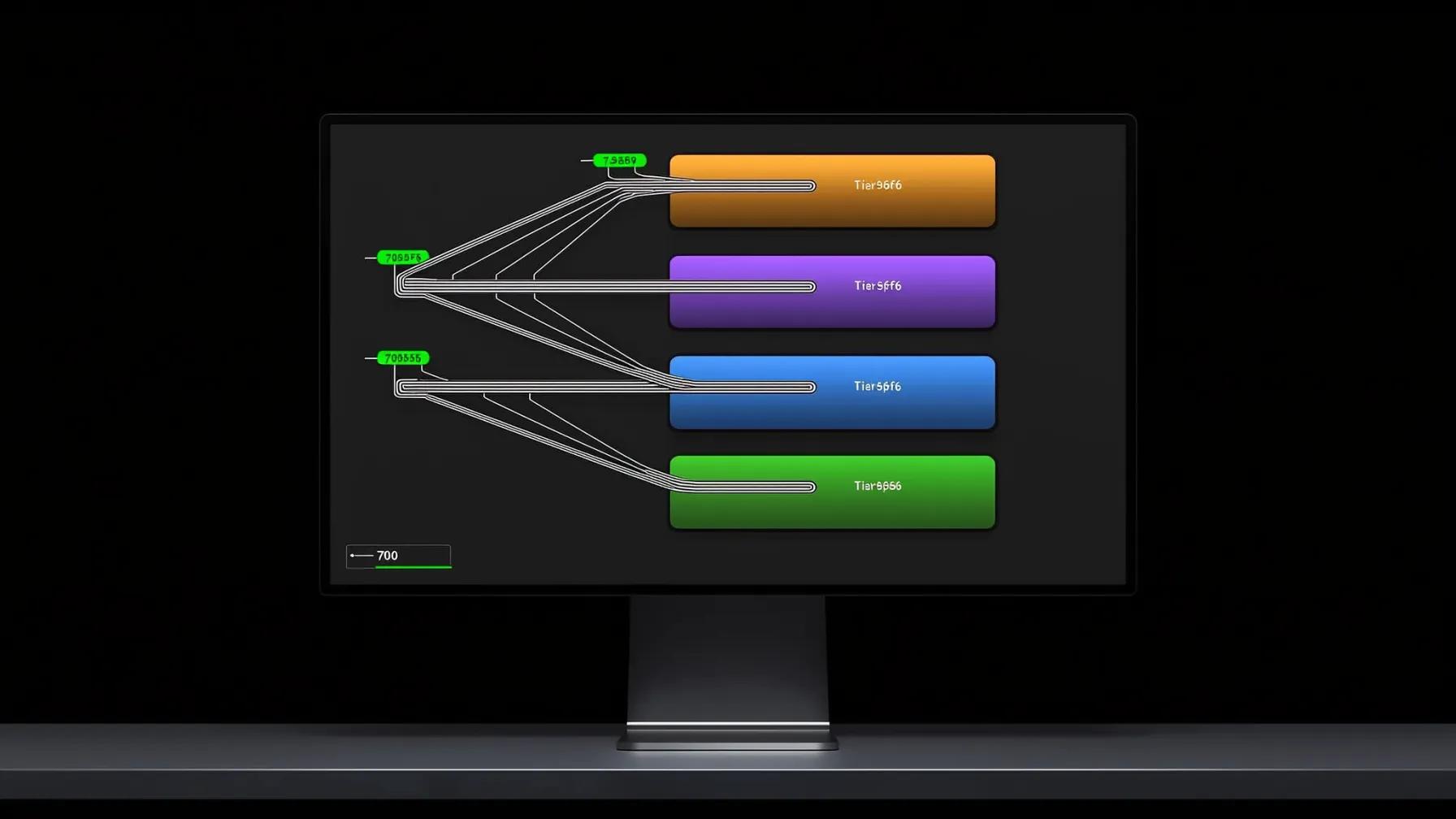

The decision tree has four tiers.
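As a rough sketch of that decision tree: in the system described here, Sonnet itself makes the routing call, so the keyword lists below are hypothetical stand-ins for its judgment, and the `Tier` names are ours for illustration only.

```python
from enum import Enum


class Tier(Enum):
    SCRIPT = 0         # Tier 0: run a pre-built script, no LLM reasoning
    SONNET_DIRECT = 1  # Tier 1: orchestrator answers in the main session
    OPUS_AGENT = 2     # Tier 2: spawn a coding sub-agent
    TWO_STAGE = 3      # Tier 3: content first, then implementation


# Hypothetical trigger phrases; a real orchestrator would decide with the model.
SCRIPT_PHRASES = ("mrr", "open prs", "zoom meetings")
PUBLISH_PHRASES = ("blog post", "landing page")
CODING_PHRASES = ("build", "debug", "refactor", "implement")


def choose_tier(request: str) -> Tier:
    """Pick the cheapest tier that can plausibly handle the request."""
    text = request.lower()
    if any(p in text for p in SCRIPT_PHRASES):
        return Tier.SCRIPT
    if any(p in text for p in PUBLISH_PHRASES):
        return Tier.TWO_STAGE
    if any(p in text for p in CODING_PHRASES):
        return Tier.OPUS_AGENT
    # Default: the orchestrator does the work itself.
    return Tier.SONNET_DIRECT
```

The ordering matters: the cheapest option is checked first, and anything unrecognized falls through to the orchestrator rather than to the expensive model.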

Tier 0: Script Execution — No LLM at All (40% of traffic)

This is the biggest cost saver and most teams miss it entirely. We built a library of 66 pre-built shell scripts covering every service integration we use: Stripe, GitHub, Supabase, Vercel, Netlify, Jira, Slack, Zoom, Twilio, and more.

When Sonnet recognizes a request as a service query — "What's our MRR?", "List open PRs", "Show upcoming Zoom meetings" — it doesn't reason through the API. It runs a script. Zero reasoning tokens. Deterministic output. No hallucination.

Result: 3-5 seconds, 3-5k tokens total (just Sonnet's routing overhead). We were spending 8,000+ tokens on Opus for the same answers.

Tier 1: Sonnet Direct — The Workhorse (40% of traffic)

Research, analysis, content writing, memory lookups, skill execution — Sonnet handles all of this directly in the main session. No sub-agent needed. No spawn overhead.

This is where the "orchestrator as workhorse" insight pays off. Instead of Sonnet spending tokens to classify and route, it just… does the work. A company research request, a GA4 analysis, a blog draft, a Slack thread summary — Sonnet processes these in 5-12k tokens with quality that rivals Opus for non-coding tasks.

Result: 10-30 seconds, 5-12k tokens. Fast enough for interactive use. Good enough that users don't notice it's not the top-tier model.

Tier 2: Opus Sub-Agents — Heavy Lifting (15% of traffic)

When the request requires actual code generation, architecture decisions, complex debugging, or building new features — Sonnet spawns a Claude Opus sub-agent. Opus is expensive. It's also irreplaceable for this class of work.

The key optimization: context pruning. We don't hand Opus our entire codebase. Sonnet loads only the relevant skill file, project memory, and component registry. Sub-agent context stays under 15k tokens instead of the 27k+ that a naive approach would produce.

Result: 60-120 seconds, 12-18k tokens (2-3k orchestration + 10-15k Opus). Quality is non-negotiable here. Every coding task gets the best model.
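Context pruning can be illustrated with a simple token budget. This is a minimal sketch, not the production implementation: the 4-characters-per-token estimate is a crude assumption (a real system would use the model's tokenizer), and `ContextPart` and `build_subagent_context` are hypothetical names.

```python
from dataclasses import dataclass

MAX_CONTEXT_TOKENS = 15_000  # sub-agent budget quoted in the article


def estimate_tokens(text: str) -> int:
    # Assumption: roughly 4 characters per token. Replace with a real tokenizer.
    return max(1, len(text) // 4)


@dataclass
class ContextPart:
    name: str
    text: str


def build_subagent_context(
    parts: list[ContextPart], budget: int = MAX_CONTEXT_TOKENS
) -> tuple[str, int]:
    """Add parts in priority order, skipping any that would exceed the budget."""
    chosen: list[str] = []
    used = 0
    for part in parts:
        cost = estimate_tokens(part.text)
        if used + cost > budget:
            continue  # too large for the remaining budget, leave it out
        chosen.append(f"## {part.name}\n{part.text}")
        used += cost
    return "\n\n".join(chosen), used
```

Passing the skill file, project memory, and component registry first, in that order, means the pieces the sub-agent most needs are admitted before anything optional.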

Tier 3: Two-Stage Pipelines — Content to Implementation (5% of traffic)

Some requests need both content expertise and implementation skill. Take a marketing blog post. Sonnet writes excellent prose but shouldn't build the HTML structure from scratch. Opus builds excellent HTML, but you don't want to pay Opus rates for writing drafts.

Our solution: a two-stage pipeline. Stage 1: Sonnet writes the content (blog copy, research, analysis). Stage 2: Opus implements it into the site's component structure, design system, and deployment pipeline.

Result: Total cost ~$0.14 per blog post. Sonnet handles 8-10k tokens of content, Opus handles 8-10k tokens of implementation. Each model does what it's best at.
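The two stages compose naturally as two function calls. The sketch below assumes each model is wrapped in a plain callable; `fake_sonnet` and `fake_opus` are stubs invented for illustration so the example runs without any API.

```python
from typing import Callable

ModelCall = Callable[[str], str]


def two_stage_pipeline(
    brief: str, write_content: ModelCall, implement: ModelCall
) -> dict[str, str]:
    """Stage 1 writes the content, stage 2 turns it into site markup."""
    draft = write_content(brief)   # content model (Sonnet in the article)
    page = implement(draft)        # implementation model (Opus in the article)
    return {"draft": draft, "page": page}


# Stub models, purely illustrative.
def fake_sonnet(brief: str) -> str:
    return f"Draft about {brief}"


def fake_opus(draft: str) -> str:
    return f"<article>{draft}</article>"


result = two_stage_pipeline("orchestration", fake_sonnet, fake_opus)
print(result["page"])
```

Keeping the draft in the return value makes it easy to log or review the content separately from the implementation.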

The Architecture That Makes It Work

Four components power the orchestration layer.

1. Lazy Context Loading

Every request uses a pattern we call Index → Registry → Resource. Instead of loading everything upfront, Sonnet checks an index file, identifies what's relevant, and loads only that resource. Memory files, skill definitions, component docs, project history — all loaded on demand.

This keeps base context under 15k tokens for most requests. When we started, naive context loading was pushing 27k+ tokens before the model even started working. Those are tokens you're paying for on every single request. Lazy loading cut our baseline cost by roughly 40%.
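One way to picture Index → Registry → Resource is a small in-memory version. Everything named here (`LazyContext`, the keywords, the loader lambdas) is hypothetical; the article's indexes are files, and matching is done by the model rather than by substring.

```python
from typing import Callable

Loader = Callable[[], str]


class LazyContext:
    """Index maps a keyword to (registry, key); registries map keys to loaders."""

    def __init__(
        self,
        index: dict[str, tuple[str, str]],
        registries: dict[str, dict[str, Loader]],
    ) -> None:
        self.index = index
        self.registries = registries
        self.loaded: dict[tuple[str, str], str] = {}  # cache of loaded resources

    def load_for(self, request: str) -> list[str]:
        text = request.lower()
        resources = []
        for keyword, ref in self.index.items():
            if keyword not in text:
                continue  # irrelevant to this request, never loaded
            if ref not in self.loaded:
                registry, key = ref
                self.loaded[ref] = self.registries[registry][key]()
            resources.append(self.loaded[ref])
        return resources


ctx = LazyContext(
    index={"blog": ("skills", "blog_writing"), "stripe": ("scripts", "stripe")},
    registries={
        "skills": {"blog_writing": lambda: "blog skill definition"},
        "scripts": {"stripe": lambda: "stripe script docs"},
    },
)
print(ctx.load_for("Draft a blog post"))
```

Only the blog skill is loaded for that request; the Stripe docs stay untouched until a request actually mentions them.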

2. Script Library (66 scripts, 17 services)

Each service integration is a standalone bash script. Stripe balance? bash scripts/stripe/balance.sh. Open PRs? bash scripts/github/open-prs.sh. Zoom transcripts? bash scripts/zoom/download-transcript.sh.

The scripts handle authentication, pagination, error handling, and output formatting. The LLM's only job is recognizing which script to run. This eliminates an entire class of hallucination — the model never fabricates API responses because it never calls the API.
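The dispatch side can be very small. In this sketch the script paths are the ones quoted above, while the intent names and the `command_for` / `run_script` helpers are assumptions made for illustration.

```python
import subprocess

# Intent names are hypothetical; paths are the examples from the article.
SCRIPTS = {
    "stripe_balance": "scripts/stripe/balance.sh",
    "github_open_prs": "scripts/github/open-prs.sh",
    "zoom_transcript": "scripts/zoom/download-transcript.sh",
}


def command_for(intent: str) -> list[str]:
    """Return the command for a known intent; never build one from model output."""
    if intent not in SCRIPTS:
        raise KeyError(f"no script registered for intent: {intent}")
    return ["bash", SCRIPTS[intent]]


def run_script(intent: str, timeout_s: float = 30.0) -> str:
    """Run the script and return its stdout; raises if the script fails."""
    result = subprocess.run(
        command_for(intent),
        capture_output=True,
        text=True,
        check=True,
        timeout=timeout_s,
    )
    return result.stdout
```

Because the model only picks a key from a fixed table, it cannot invent a command or an API response: an unknown intent fails loudly instead.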

3. Skill-Based Routing

Complex tasks are handled by skills — self-contained instruction sets that tell the model exactly how to handle a specific type of work. Company research, blog writing, code review, SEO audits — each is a skill with its own context, templates, and quality checks.

When a request matches a skill, Sonnet loads that skill's definition and follows it. Most skills execute directly on Sonnet. Coding skills spawn Opus. The skill system means we can add new capabilities without changing the routing logic.
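A skill registry along these lines shows why new capabilities don't touch the routing logic. The `Skill` fields, trigger phrases, and the choice to treat code review as an Opus skill are all assumptions for the sake of the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Skill:
    name: str
    triggers: tuple[str, ...]
    model: str          # "sonnet" runs in the main session, "opus" spawns a sub-agent
    instructions: str   # the skill's self-contained instruction set


SKILLS = [
    Skill("company_research", ("research",), "sonnet", "Research the company..."),
    Skill("blog_writing", ("blog",), "sonnet", "Write a blog draft..."),
    Skill("seo_audit", ("seo audit",), "sonnet", "Audit the page..."),
    # Assumption: code review is treated as a coding skill here.
    Skill("code_review", ("code review",), "opus", "Review the diff..."),
]


def match_skill(request: str, skills: list[Skill] = SKILLS) -> Optional[Skill]:
    """Return the first skill whose trigger appears in the request, if any."""
    text = request.lower()
    for skill in skills:
        if any(trigger in text for trigger in skill.triggers):
            return skill
    return None
```

Adding a capability means appending one `Skill` entry; `match_skill` stays the same.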

4. Monitoring and Loop Prevention

Every routing decision is logged. We track token counts per tier, misclassification rates, and failure patterns. If any automated job fails three consecutive times, it's disabled immediately and we get an alert. No runaway costs. No infinite retry loops.

Hourly heartbeat checks (running on Sonnet — cheap enough to run frequently) verify system health, check for stuck tasks, and triage memory files. This costs almost nothing but catches problems before they compound.
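The three-strikes rule is a small circuit breaker. This is a minimal sketch under the assumption that each job reports success or failure after every run; `JobGuard` and its `alert` callback are hypothetical names.

```python
from collections import defaultdict
from typing import Callable

MAX_CONSECUTIVE_FAILURES = 3  # threshold from the article


class JobGuard:
    """Disables a job after too many consecutive failures and alerts once."""

    def __init__(
        self,
        alert: Callable[[str], None],
        max_failures: int = MAX_CONSECUTIVE_FAILURES,
    ) -> None:
        self.alert = alert
        self.max_failures = max_failures
        self.failures: dict[str, int] = defaultdict(int)
        self.disabled: set[str] = set()

    def record(self, job: str, ok: bool) -> None:
        if ok:
            self.failures[job] = 0  # a success resets the streak
            return
        self.failures[job] += 1
        if self.failures[job] >= self.max_failures and job not in self.disabled:
            self.disabled.add(job)
            self.alert(f"{job} disabled after {self.failures[job]} consecutive failures")

    def is_enabled(self, job: str) -> bool:
        return job not in self.disabled
```

A scheduler would call `is_enabled` before each run and `record` after it, so a failing job stops consuming tokens until someone re-enables it.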

The Numbers That Matter

Per-request token reduction: 40-70% depending on request type.

This isn't a lab stat. This is production data from hundreds of daily requests.

Before orchestration: average request cost 18,000 tokens at Opus rates.

After orchestration: average request cost 6,500 tokens, with 40% of requests burning zero reasoning tokens (scripts) and another 40% running on Sonnet instead of Opus.

The cost breakdown by tier:

- Script execution: 3-5k tokens (routing only). Was 8k+ on Opus.

- Sonnet direct: 5-12k tokens. Was 12-18k on Opus for the same tasks.

- Opus coding: 12-18k tokens. Same as before, but now only 15% of traffic instead of 100%.

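- Two-stage pipeline: 19-25k tokens total, split across two models at different price points. That is consistent with the 16-20k of content and implementation work described earlier plus routing overhead.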
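As a sanity check, the tier ranges can be weighted by the traffic shares quoted earlier (40%, 40%, 15%, 5%). The calculation below uses only those published figures; the `TIERS` table and `blended_range` helper are just a convenient way to lay them out.

```python
# (traffic share in percent, low tokens, high tokens) per tier, from the article
TIERS = {
    "script": (40, 3_000, 5_000),
    "sonnet_direct": (40, 5_000, 12_000),
    "opus_coding": (15, 12_000, 18_000),
    "two_stage": (5, 19_000, 25_000),
}


def blended_range(tiers: dict[str, tuple[int, int, int]]) -> tuple[float, float]:
    """Traffic-weighted average tokens per request, as a (low, high) range."""
    low = sum(pct * lo for pct, lo, _ in tiers.values()) / 100
    high = sum(pct * hi for pct, _, hi in tiers.values()) / 100
    return low, high


low, high = blended_range(TIERS)
print(f"blended average: {low:,.0f} to {high:,.0f} tokens per request")
```

This gives a blended range of 5,950 to 10,750 tokens per request, so the measured 6,500 average sits toward the low end of what the tier figures allow.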

Over a week of 500 requests per day, the savings are significant. The exact dollar amount depends on your pricing tier, but we consistently see 60%+ reduction in total AI spend.
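To put numbers on that week, here is the arithmetic using only the averages quoted above (18,000 tokens before, 6,500 after). It counts tokens, not dollars; the function names are illustrative.

```python
def token_reduction(before: int, after: int) -> float:
    """Fraction of tokens saved per request."""
    return (before - after) / before


def weekly_token_savings(
    requests_per_day: int, days: int, before: int, after: int
) -> int:
    """Total tokens saved over the period at the given per-request averages."""
    return requests_per_day * days * (before - after)


saved = weekly_token_savings(500, 7, 18_000, 6_500)
print(f"{saved:,} tokens saved per week")
print(f"{token_reduction(18_000, 6_500):.1%} fewer tokens per request")
```

That works out to 40,250,000 fewer tokens per week, a reduction of about 63.9% per request. Since the earlier baseline was billed entirely at Opus rates, the dollar reduction should be at least as large wherever the remaining tokens run on a cheaper model.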

Speed Is the Hidden Win

Script queries return in 3-5 seconds. Sonnet responses arrive in 10-30 seconds. Only coding tasks take 60+ seconds — and those are tasks where speed doesn't matter as much as correctness.

The speed improvement changed how we design features. When simple queries return in under 5 seconds, you can build real-time dashboards backed by AI. When research takes 15 seconds instead of 60, it becomes interactive. Speed isn't just a nice metric — it unlocks new product capabilities.

Quality Goes Up, Not Down

This is the counterintuitive part. By using cheaper models for most work, we actually improved average output quality. Why? Because each model is now handling work it's suited for. Sonnet doesn't struggle with code architecture (it doesn't try). Opus doesn't waste cycles on data lookups (it never sees them). Scripts don't hallucinate API responses (they can't).

Task-model alignment means fewer errors, faster iteration, and better results across the board.

The Catch

This isn't free complexity. Orchestration requires upfront investment:

- Script library. Each service integration needs a dedicated script. We have 66 across 17 services. That's real engineering time, but each script pays for itself within a day of use.

- Skill definitions. Complex tasks need structured instructions. Writing good skills takes iteration — the first version is never the final version.

- Context discipline. Lazy loading only works if you maintain clean indexes. Every new component, script, or skill must be registered immediately. If it's not in the index, it doesn't exist to future sessions.

- Monitoring. You need to track routing decisions, token spend, and failure rates. Without feedback loops, your orchestration layer drifts.

For teams running LLMs at scale — dozens to hundreds of requests per day — this investment pays back within the first week. For hobby projects or low-volume use cases, a single model is fine.

What This Means For Your Team

If you're running everything through one model, you're likely overpaying, by 40-60% if your traffic looks anything like ours. The fix isn't complicated, but it does require a mindset shift: stop thinking of your AI as a single model and start thinking of it as a system with specialized components.

Start with the highest-impact change: pull service queries out of your LLM entirely. Build scripts. This alone can cut 30-40% of your token spend because these are your most frequent, lowest-complexity requests.

Then evaluate whether your orchestrator can handle more work directly. Most teams over-route to expensive models. If Sonnet (or GPT-4o-mini, or whatever your mid-tier model is) can handle 80% of your traffic, let it. Save the heavy model for the 15-20% that genuinely needs it.

The payback is immediate. The first week saves money. After a month, you'll wonder why you ever ran everything through one model.

TL;DR: We cut AI costs by 60%+ with a four-tier system: scripts for service queries (zero reasoning tokens, only routing overhead), Sonnet as orchestrator + workhorse (80% of traffic), Opus for coding only (15%), and two-stage pipelines for content-to-implementation (5%). Single-model is the new monolith: it works until it doesn't scale.

Running AI at scale? Let's compare notes on your orchestration setup.