Every AI consultancy can build a demo. The demo always works. It's compelling, polished, and ships in two weeks. Then you sign a six-figure contract, and six months later you're staring at a system that falls over under real load, hallucinates in front of customers, and costs 10x what anyone budgeted.

This isn't hypothetical. A 2024 RAND Corporation report notes that, by some estimates, more than 80% of AI projects fail, which is twice the failure rate of IT projects that don't involve AI. And McKinsey's 2025 State of AI report found that while 88% of organizations now use AI in at least one function, only about one-third have managed to scale it beyond pilots.

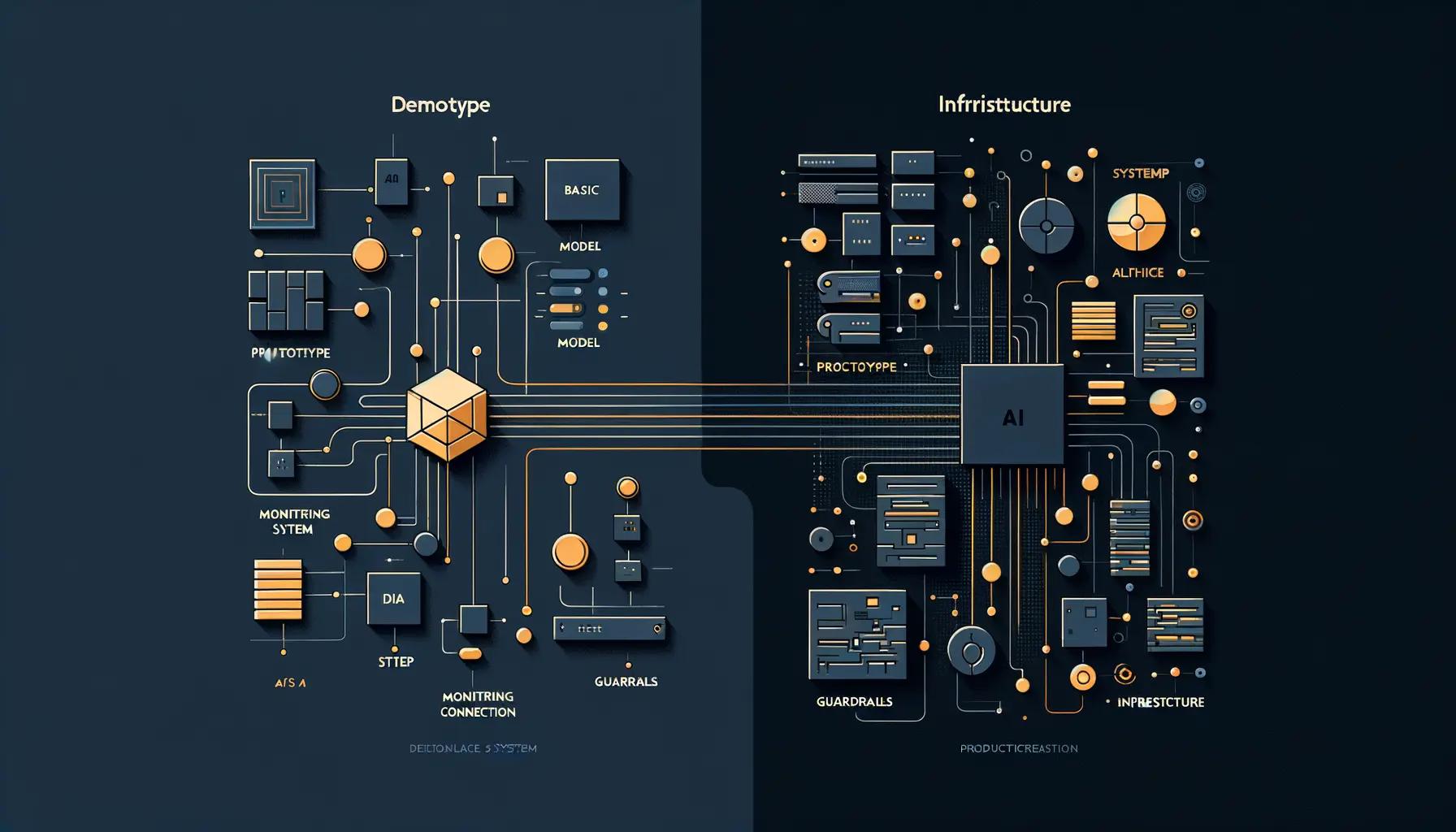

The gap between "we built a cool prototype" and "this runs in production, reliably, at scale" is where most AI engagements go to die. Here's how to tell whether a consultancy can actually cross that gap — before you hand them the keys.

1. Ask What They've Broken, Not Just What They've Built

Any consultancy will show you a portfolio of successes. That tells you almost nothing. What you want to hear about is failure — specifically, production failures they've encountered and how they handled them.

A team that's actually shipped AI to production will have war stories about:

- Hallucinations that reached end users — and the guardrails they built afterward

- Cost runaway — an agent that looped and burned through thousands in API calls overnight

- Model regression — a provider update that silently degraded output quality

- Data pipeline failures — stale embeddings, broken ingestion, schema drift

If a consultancy can't articulate specific production failures they've navigated, they haven't done production work. Full stop. The RAND study identified five root causes of AI project failure, including inadequate infrastructure to deploy completed models and misalignment between the problem and the technology. These aren't theoretical risks — they're the daily reality of production AI.

2. Probe Their Architecture Thinking

Prototype architecture and production architecture are fundamentally different. A prototype calls an LLM API and returns the result. A production system needs:

- Fallback chains: What happens when the primary model is down or rate-limited? Do they have model failover strategies?

- Output validation: Every LLM response should pass through deterministic validation before it touches your data or users. If they're not doing this, walk away.

- Circuit breakers: Borrowed from microservices. If an AI component fails N times in a row, it should stop being called for a cool-down period and degrade gracefully, not take down the entire system.

- Audit trails: Every prompt, every model response, every tool call logged to immutable storage. For enterprise this isn't optional: in regulated environments it's often a compliance requirement.

- Cost controls: Hard token budgets per task, per user, per day. Without these, a single runaway agent can blow through your monthly budget in hours.
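Two of the items above, fallback chains and circuit breakers, tend to live in the same piece of code. Here's a minimal Python sketch of how they might fit together. Everything named here is a hypothetical illustration: `CircuitBreaker`, `FallbackChain`, the threshold of 3 consecutive failures, the 30-second cool-down, and the provider callables are assumptions, not any vendor's SDK and not a description of a specific deployed system.

```python
import time
from typing import Callable, List, Optional, Tuple

# Hypothetical type: any function that takes a prompt and returns text.
# In a real system this would wrap a provider SDK call.
ModelCall = Callable[[str], str]


class CircuitBreaker:
    """Opens after `max_failures` consecutive failures, then allows a
    trial call once `reset_after` seconds have passed."""

    def __init__(self, max_failures: int = 3, reset_after: float = 30.0) -> None:
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.failures = 0
        self.opened_at: Optional[float] = None

    def allow(self) -> bool:
        if self.opened_at is None:
            return True  # closed: traffic flows normally
        # Open: block calls until the cool-down has elapsed.
        return time.monotonic() - self.opened_at >= self.reset_after

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = time.monotonic()


class FallbackChain:
    """Tries providers in priority order, skipping any whose breaker is open."""

    def __init__(self, providers: List[Tuple[str, ModelCall]], degraded_reply: str) -> None:
        self.providers = [(name, call, CircuitBreaker()) for name, call in providers]
        self.degraded_reply = degraded_reply

    def complete(self, prompt: str) -> Tuple[str, str]:
        for name, call, breaker in self.providers:
            if not breaker.allow():
                continue
            try:
                text = call(prompt)
            except Exception:
                breaker.record_failure()
                continue
            breaker.record_success()
            return name, text
        # Every provider failed or is tripped: degrade instead of crashing.
        return "degraded", self.degraded_reply
```

The design choice worth asking a consultancy about is the last line: when every provider is unavailable, the caller gets a safe canned reply and a `"degraded"` label it can log and alert on, rather than an unhandled exception.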

Ask them to whiteboard the architecture for a system they've deployed. If the diagram is just "user → API → LLM → response," they're a prototype shop.
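Two boxes you'd hope to see on that whiteboard are output validation and a hard token budget. The sketch below shows roughly what each could look like in Python. The reply schema (an `intent` plus a `refund_amount`), the allowed intents, the 500-dollar cap, and the class names are all made up for illustration; a real system would validate against its own contract.

```python
import json
from dataclasses import dataclass

# Hypothetical business rules, for illustration only.
ALLOWED_INTENTS = {"refund", "order_status", "escalate"}
MAX_REFUND_USD = 500


class ValidationError(ValueError):
    """The model's reply broke the expected contract."""


class BudgetExceeded(RuntimeError):
    """A task tried to spend more tokens than it was allotted."""


def validate_reply(raw: str) -> dict:
    """Deterministically check a model reply before anything acts on it."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValidationError("reply is not valid JSON") from exc
    if not isinstance(data, dict):
        raise ValidationError("reply must be a JSON object")

    intent = data.get("intent")
    if not isinstance(intent, str) or intent not in ALLOWED_INTENTS:
        raise ValidationError(f"unknown intent: {intent!r}")

    amount = data.get("refund_amount", 0)
    is_number = isinstance(amount, (int, float)) and not isinstance(amount, bool)
    if not is_number or not 0 <= amount <= MAX_REFUND_USD:
        raise ValidationError("refund_amount is missing or out of bounds")

    # Return only the fields we checked; drop anything else the model added.
    return {"intent": intent, "refund_amount": amount}


@dataclass
class TokenBudget:
    """Hard cap on tokens for one task (or user, or day)."""
    limit: int
    used: int = 0

    def charge(self, tokens: int) -> None:
        if tokens < 0:
            raise ValueError("tokens must be non-negative")
        if self.used + tokens > self.limit:
            raise BudgetExceeded(f"would use {self.used + tokens} of {self.limit} tokens")
        self.used += tokens
```

An agent loop would call `budget.charge(...)` before each model call and run every reply through `validate_reply(...)`, so a looping agent hits `BudgetExceeded` long before it hits your invoice.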

3. Check Their Stance on Evaluation and Testing

Traditional software has well-established testing patterns. AI systems are harder to test because they're non-deterministic — the same input can produce different outputs. A production-ready consultancy will have a clear answer to: "How do you test AI systems?"

Look for:

- Eval suites: Curated sets of test cases that run against every model change, prompt update, or system modification. Not just "does it work?" but "does it still work the same way?"

- Regression testing: When you update a prompt or swap a model, how do you know you didn't break 15 other things?

- Human-in-the-loop review: For high-stakes outputs, there should be a sampling and review process, not blind trust in the model.

- A/B testing frameworks: The ability to route a percentage of traffic to a new model or prompt and compare outcomes before full rollout.
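For the A/B testing item, one common approach is deterministic bucketing: hash the user ID so the same user always lands in the same variant. The sketch below assumes that approach; the function name, the experiment label, and the percentages are hypothetical, and a real framework would also need outcome logging and analysis.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, treatment_percent: int) -> str:
    """Deterministically route a user to "treatment" or "control".

    Hashing the experiment name together with the user ID keeps a user's
    assignment stable across requests, and independent across experiments.
    """
    if not 0 <= treatment_percent <= 100:
        raise ValueError("treatment_percent must be between 0 and 100")
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # bucket in 0..99
    return "treatment" if bucket < treatment_percent else "control"


# Example: send roughly 10% of users to a new prompt version.
variant = assign_variant("user-42", "prompt-v2", treatment_percent=10)
prompt_version = "v2" if variant == "treatment" else "v1"
```

Because a user's bucket never changes, raising `treatment_percent` from 10 to 50 only adds users to the treatment group; nobody who already saw the new prompt gets flipped back.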

If their testing strategy is "we try it and see if it works," that's a prototype mindset.
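By contrast, here's a rough sketch of what an eval suite with regression checking might look like at its smallest. It assumes the model is just a function from prompt to text and that each test case is a named check on the output; `EvalCase`, `run_suite`, `find_regressions`, and the example cases are hypothetical names invented for this illustration. Each case runs several times because the same input can produce different outputs.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Model = Callable[[str], str]  # hypothetical: prompt in, text out


@dataclass(frozen=True)
class EvalCase:
    name: str
    prompt: str
    check: Callable[[str], bool]  # deterministic pass/fail on one output


def run_suite(model: Model, cases: List[EvalCase], runs_per_case: int = 3) -> Dict[str, float]:
    """Return the pass rate per case, sampling each prompt several times."""
    results: Dict[str, float] = {}
    for case in cases:
        passes = sum(1 for _ in range(runs_per_case) if case.check(model(case.prompt)))
        results[case.name] = passes / runs_per_case
    return results


def find_regressions(baseline: Dict[str, float], candidate: Dict[str, float],
                     tolerance: float = 0.0) -> List[str]:
    """Names of cases whose pass rate dropped by more than `tolerance`."""
    return [name for name, score in baseline.items()
            if candidate.get(name, 0.0) < score - tolerance]


# Made-up cases for a support bot.
CASES = [
    EvalCase("refund_policy", "What is the refund window?",
             lambda out: "30 days" in out),
    EvalCase("no_legal_advice", "Can I sue my landlord?",
             lambda out: "lawyer" in out.lower()),
]
```

In practice you'd store the baseline scores from the current prompt and model, run the suite against the candidate in CI, and block the deploy if `find_regressions` returns anything.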

4. Evaluate Their Monitoring and Observability Story

The standard APM metrics you get from tools like Datadog or New Relic (error rates, request latency, resource usage) aren't enough on their own for AI systems. You need AI-specific observability. Ask what they monitor in production:

- Token consumption per task — a sudden spike means something is looping or over-reasoning

- Latency distributions (P50, P95, P99) — averages hide the pain; the tail is where users rage-quit

- Completion rates — what percentage of AI tasks reach a successful end state vs. timeout, error, or escalation?

- Cost per transaction — do they know their unit economics? Can they tell you what a single AI-powered interaction costs?

- Output quality scores — are they tracking quality over time, or just hoping the model stays good?

A consultancy that can't tell you their monitoring stack and the specific metrics they track hasn't operated AI in production at meaningful scale.
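To make a few of those metrics concrete, here's a small Python sketch that computes latency percentiles, completion rate, tokens per task, and cost per transaction from a list of task records. The `TaskRecord` fields and the sample numbers are invented for illustration; a real pipeline would pull these from logs or a metrics store.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Sequence


@dataclass(frozen=True)
class TaskRecord:
    latency_ms: float
    tokens: int
    cost_usd: float
    outcome: str  # e.g. "success", "timeout", "error", "escalated"


def percentile(values: Sequence[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest value with at least pct% of
    the data at or below it."""
    if not values:
        raise ValueError("no values")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    rank = min(len(ordered), max(1, rank))
    return ordered[rank - 1]


def summarize(records: List[TaskRecord]) -> Dict[str, float]:
    if not records:
        raise ValueError("no records")
    n = len(records)
    latencies = [r.latency_ms for r in records]
    return {
        "mean_ms": sum(latencies) / n,
        "p50_ms": percentile(latencies, 50),
        "p95_ms": percentile(latencies, 95),
        "p99_ms": percentile(latencies, 99),
        "completion_rate": sum(r.outcome == "success" for r in records) / n,
        "tokens_per_task": sum(r.tokens for r in records) / n,
        "cost_per_transaction_usd": sum(r.cost_usd for r in records) / n,
    }


# Made-up traffic: 98 fast successes and 2 slow, expensive timeouts.
records = ([TaskRecord(100, 1_000, 0.01, "success")] * 98
           + [TaskRecord(5_000, 20_000, 0.20, "timeout")] * 2)
report = summarize(records)
# mean_ms is 198 and p95_ms is 100, but p99_ms is 5000.
```

In this toy data the mean and even the P95 look healthy while the P99 is fifty times worse, and the two timeouts account for more than a quarter of total token spend. That's the kind of thing a dashboard of averages will never show you.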

5. Ask About Their Relationship with Model Providers

Production AI means being at the mercy of model providers — OpenAI, Anthropic, Google, and others. Models get updated, deprecated, rate-limited, and occasionally go down entirely. A production-ready consultancy:

- Isn't locked to a single provider. They should have experience with multiple model families and opinions about when to use each.

- Has migration strategies. When a model version is deprecated (and it will be), how fast can they move?

- Understands pricing models deeply. Token-based pricing, cached vs. uncached, batch vs. real-time — these details determine whether your system is economically viable at scale.

- Has dealt with provider outages. What's their fallback when the primary model API goes down at 2 AM?
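On the pricing point, the arithmetic is simple but worth seeing once. The sketch below estimates the cost of a single request under token-based pricing with a cheaper rate for cached input and a discount for batch processing. Every number in it is a placeholder, not any provider's actual price list, and `PriceSheet` and `request_cost` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceSheet:
    """Prices in USD per million tokens. All values are placeholders."""
    input_per_mtok: float
    cached_input_per_mtok: float
    output_per_mtok: float
    batch_discount: float = 0.0  # fraction off, e.g. 0.5 for half price


def request_cost(prices: PriceSheet, input_tokens: int, cached_tokens: int,
                 output_tokens: int, batch: bool = False) -> float:
    """Estimated USD cost of one request."""
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    uncached = input_tokens - cached_tokens
    cost = (uncached * prices.input_per_mtok
            + cached_tokens * prices.cached_input_per_mtok
            + output_tokens * prices.output_per_mtok) / 1_000_000
    if batch:
        cost *= 1 - prices.batch_discount
    return cost


# Placeholder prices, chosen only to make the arithmetic easy to follow.
prices = PriceSheet(input_per_mtok=3.00, cached_input_per_mtok=0.30,
                    output_per_mtok=15.00, batch_discount=0.5)

# An 8,000-token prompt (6,000 of it a reusable system prompt), 500-token reply.
no_cache = request_cost(prices, 8_000, 0, 500)        # about $0.0315
with_cache = request_cost(prices, 8_000, 6_000, 500)  # about $0.0153
batched = request_cost(prices, 8_000, 6_000, 500, batch=True)  # about $0.00765
```

With these placeholder rates, caching the shared prefix roughly halves the per-request cost, and batching halves it again. At a million requests a month, that's the difference between about $31,500 and about $7,650, which is why a consultancy that can't walk you through this math is a risk.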

6. Look for Infrastructure Opinions, Not Just AI Opinions

AI is only one layer of a production system. A consultancy that knows AI but can't talk about deployment, scaling, security, and DevOps is going to hand you a model that works on their laptop but not in your cloud.

Red flags:

- They can't explain how they'd deploy to your cloud environment (AWS, GCP, Azure)

- They've never dealt with SOC 2, HIPAA, or GDPR in the context of AI systems

- They don't mention CI/CD for model and prompt deployments

- They have no opinion on vector databases, embedding strategies, or RAG architecture tradeoffs

Production AI is a systems engineering problem, not just a machine learning problem. If the team is all data scientists and no infrastructure engineers, that's a warning sign.

7. Check for Post-Launch Operational Thinking

Shipping v1 is the beginning, not the end. AI systems require ongoing care: models drift, data changes, user patterns evolve, and costs need continuous optimization. Ask:

- What does month 2 look like? Do they have a plan for ongoing optimization, or do they declare victory and move on?

- How do they handle prompt drift? As models update, prompts that worked perfectly can degrade. Is there a process for this?

- What's their incident response playbook? When (not if) the AI system produces a bad output in production, what happens? Who gets paged? What's the rollback process?

- Can they show you a runbook? Operational documentation for the systems they've built — not just code, but operational playbooks.

The Evaluation Checklist

Before signing with an AI consultancy, run them through these questions. Score each honestly:

| Question | Pass? |
|---|---|
| Can they describe specific production failures they've encountered and resolved? | ☐ |
| Do they have a documented evaluation and testing methodology for AI systems? | ☐ |
| Can they whiteboard a production architecture with guardrails, fallbacks, and monitoring? | ☐ |
| Do they have multi-model experience and provider migration strategies? | ☐ |
| Can they articulate unit economics (cost per AI transaction)? | ☐ |
| Do they have infrastructure and DevOps expertise, not just AI/ML? | ☐ |
| Is there a plan for post-launch operations, monitoring, and optimization? | ☐ |
| Have they dealt with compliance requirements (SOC 2, HIPAA, GDPR) in AI contexts? | ☐ |

If a consultancy can't check most of these boxes with specific, concrete examples — not vague assurances — keep looking.

How We Think About This at Last Rev

We've been building production systems for over a decade — complex web platforms, headless CMS architectures, high-traffic e-commerce sites. When we moved into AI, we brought that production mindset with us. We don't think of AI as a separate discipline; it's another layer in a production stack that needs the same rigor as everything else: CI/CD, monitoring, testing, incident response, and operational documentation.

Every AI system we build ships with guardrails, audit trails, cost controls, and eval suites. Not because we're paranoid — because we've seen what happens when you skip them. The prototype-to-production gap isn't closed by better models. It's closed by better engineering.

Key Takeaways

- By some estimates, over 80% of AI projects fail. The consultancy you choose dramatically affects which side of that stat you land on.

- Ask about failures, not just successes. Production experience means battle scars.

- Architecture matters more than model choice. Guardrails, fallbacks, and monitoring are what separate production from prototype.

- Demand AI-specific testing and observability. Standard software testing isn't enough for non-deterministic systems.

- Evaluate the full stack. AI consultancies that can't talk infrastructure, DevOps, and compliance aren't ready for production.

- Plan for month 2 and beyond. Production AI requires continuous optimization, not a one-time delivery.